How to spot AI-generated deepfakes in 2026: A complete guide

Table of Contents

- Introduction to Deepfakes

- Understanding AI-Generated Deepfakes

- Visual and Audio Signs of Deepfakes

- Real-World Examples of Deepfakes

- Legislative Efforts to Combat Deepfakes

- Best Practices for Identifying Deepfakes

- Frequently Asked Questions

Introduction to Deepfakes

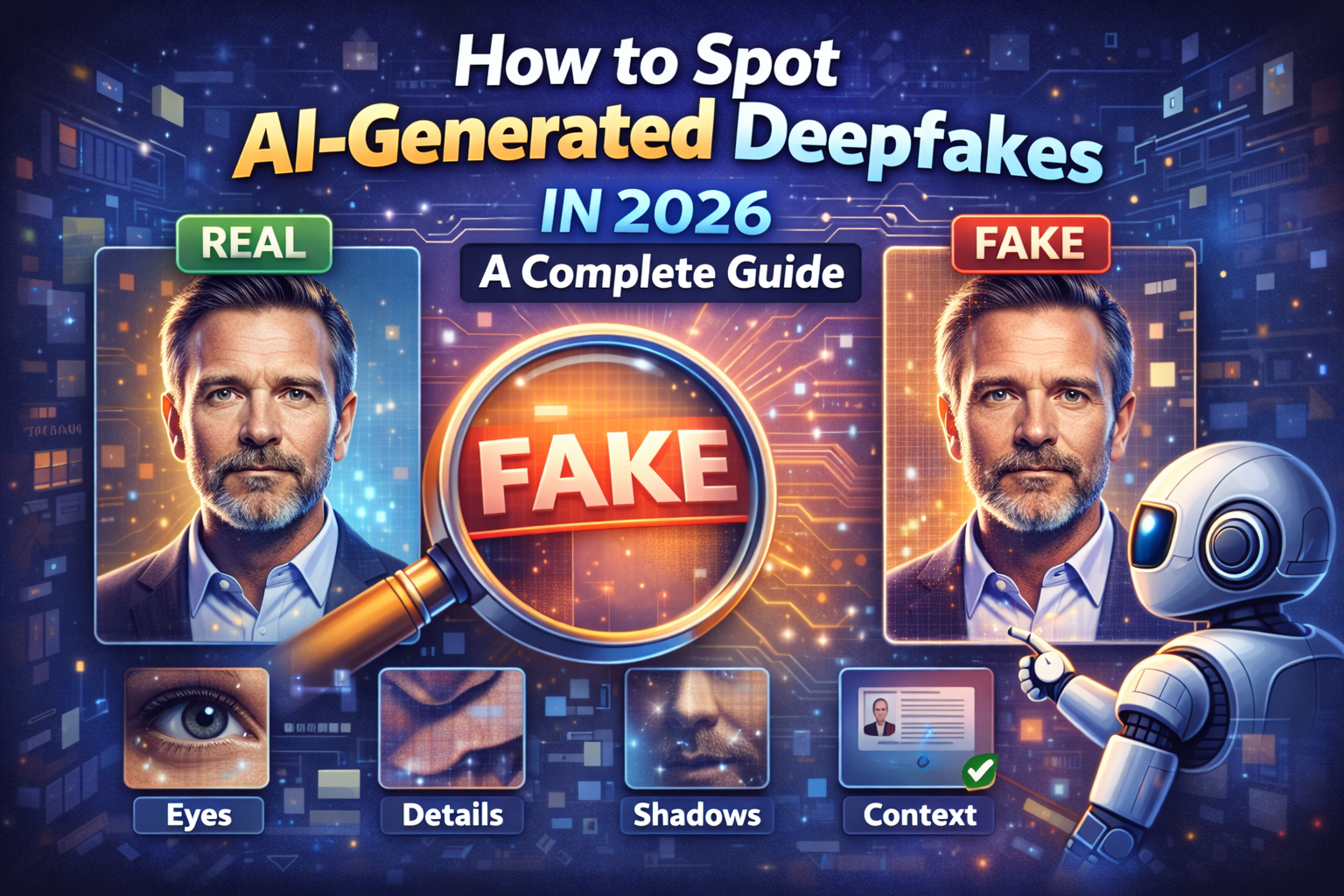

Deepfakes are videos, images, or audio recordings created or altered with AI to mimic the appearance and voice of real people. They have become increasingly sophisticated, making it difficult to distinguish genuine content from fake. Their rise has significant implications for entertainment, politics, and cybersecurity, among other fields.

Understanding AI-Generated Deepfakes

Deepfakes are produced by machine learning models, typically deep neural networks, that learn to replicate the patterns and characteristics of a person's voice, face, or movements. These models are trained on large datasets of images, video, or audio, which allows them to generate highly realistic content; the more material available of a given person, the more convincing the result tends to be. Even so, generated content can still struggle with subtle details, such as natural eye movements and blinking, or the shape and consistency of teeth.

Visual and Audio Signs of Deepfakes

Several visual and audio signs can indicate a deepfake. Visual signs include teeth that change shape or look "too perfect," unnatural blinking or eye flickering, and inconsistent or robotic body language. Audio signs include inconsistent or distorted sound quality. Lip movements that do not match the audio are a warning sign that spans both, and many fakes also produce an overall "uncanny valley" effect that makes the content feel subtly unnatural.
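For readers curious how the blinking check might be automated, here is a minimal sketch. It computes the eye aspect ratio (EAR), a measure commonly used in blink-detection research, and counts a blink whenever the ratio stays low for several consecutive frames. It assumes that six landmark points per eye have already been extracted for every frame by a separate face-landmark tool (not shown). The 0.21 threshold, the two-frame minimum, and the 8 to 30 blinks-per-minute "normal" range are illustrative assumptions, not established values. Because many modern deepfakes blink convincingly, an unusual result should be treated as one weak signal, not as proof.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def eye_aspect_ratio(eye: Sequence[Point]) -> float:
    """Return the eye aspect ratio (EAR) for six landmarks p1..p6.

    Assumed order: p1 and p4 are the eye corners, p2/p3 lie on the upper
    lid, and p6/p5 lie on the lower lid directly beneath them.
    The ratio drops toward zero as the eye closes.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


def count_blinks(ear_series: Sequence[float],
                 threshold: float = 0.21,
                 min_frames: int = 2) -> int:
    """Count runs of at least `min_frames` consecutive frames with EAR below `threshold`."""
    blinks = 0
    run = 0
    for ear in ear_series:
        if ear < threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    # A blink still in progress at the end of the clip also counts.
    if run >= min_frames:
        blinks += 1
    return blinks


def blink_rate_check(ear_series: Sequence[float], fps: float,
                     low: float = 8.0, high: float = 30.0) -> Tuple[float, bool]:
    """Return (blinks per minute, True if the rate falls outside [low, high]).

    The default bounds are hypothetical, chosen only for illustration.
    """
    if fps <= 0 or not ear_series:
        raise ValueError("need a positive frame rate and at least one frame")
    minutes = len(ear_series) / fps / 60.0
    rate = count_blinks(ear_series) / minutes
    return rate, not (low <= rate <= high)


# Usage: one minute of made-up per-frame EAR values at 30 fps with only three blinks.
ears = [0.30] * 1800
for start in (100, 500, 900):
    ears[start:start + 3] = [0.10] * 3
print(blink_rate_check(ears, fps=30))  # (3.0, True)
```

A real detector would combine many such signals, since a single heuristic like this is easy for newer generation methods to pass.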

Real-World Examples of Deepfakes

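Deepfakes have appeared in entertainment, politics, and on social media. In business settings, a deepfake video or voice of a CEO can be used to authorize fraudulent financial transactions or pressure employees into taking urgent action. In early 2024, for example, Hong Kong police reported that a finance employee at a multinational firm transferred roughly US$25 million after a video call in which the company's chief financial officer and other colleagues were deepfakes. Deepfakes can also be used to spread misinformation or propaganda, which makes effective methods for identifying and mitigating them essential.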

Legislative Efforts to Combat Deepfakes

Several countries and organizations are developing legislation and regulations to combat the spread of deepfakes. For example, the EU's AI Act, adopted in 2024, establishes a framework for the development and deployment of AI systems and includes transparency rules requiring that deepfake content be disclosed as artificially generated or manipulated. In the United States, lawmakers have considered legislation that would require social media platforms to label AI-generated content.

Best Practices for Identifying Deepfakes

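To identify deepfakes, start by watching for the visual and audio signs described above. Beyond that, check whether reputable fact-checking organizations have already examined the content, use tools such as reverse image search to trace an image or video frame back to its original source, and confirm unusual requests (such as an urgent payment instruction from an executive) through a separate, trusted channel. Be especially cautious before sharing or engaging with content that seems too good (or bad) to be true.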

Frequently Asked Questions

What are the most common signs of a deepfake?

The most common visual signs of a deepfake are teeth that change shape or look "too perfect," unnatural blinking or eye flickering, and inconsistent or robotic body language. Common audio signs include inconsistent or distorted sound quality. Lip movements that do not match the audio, and an overall "uncanny valley" effect that makes the content feel unnatural, are also strong warning signs.

How can I protect myself from deepfakes?

Learn the visual and audio warning signs described above, and verify suspicious content through fact-checking organizations or a reverse image search before sharing it. Confirm unexpected requests, especially those involving money or sensitive information, through a separate, trusted channel. Be particularly cautious with content that seems too good (or bad) to be true.

What are the implications of deepfakes for cybersecurity?

Deepfakes make social engineering attacks far more convincing. A cloned voice or fake video of a trusted person can manipulate someone into transferring money, revealing sensitive information, or taking other actions that compromise their personal or financial security. Deepfakes can also be used to spread misinformation or propaganda, which makes effective methods for identifying and mitigating them essential.

How can I report a deepfake?

If you suspect that a piece of content is a deepfake, report it to the platform or website where it is hosted. Many social media platforms have teams that review reports of manipulated media and may label or remove content that violates their policies. You can also report deepfakes to fact-checking organizations or, depending on the context and severity of the content, to law enforcement.

What is the future of deepfakes?

The future of deepfakes is uncertain, but they will likely continue to evolve and become more sophisticated. As AI technology advances, effective methods for identifying and mitigating deepfakes will become even more important. These may include new legislation and regulations, as well as technologies that can detect deceptive AI-generated content and limit its spread.